IT Careers: Planning Your Future When the Future Is Uncertain

Right now, a lot of businesses are operating in crisis mode, so they're

prioritizing cost control out of necessity. Some of those companies will make

staff cuts across the board to be "fair." Others realize that because the

future is increasingly digital, they'll need to make cuts with a scalpel

rather than an axe. Those companies are taking inventory of the skills they

have and are comparing that with what they'll need to survive and thrive in

the short term and over the long term. "Managing experts and navigating those

who live in silos is one of the most challenging and vexing issues of our

day," said Vikram Mansharamani ... Mansharamani also recommends planning for

several possible futures as opposed to "the future," which is the same advice

major consulting firms are providing client companies. In both cases it's wise

to do scenario planning for each possible circumstance. "There's a lack of

understanding of what the range of possibilities is," said Mansharamani. "A

lot of people have thought of career paths as climbing corporate ladders,

which I think is wrong." Instead, it might be wiser at times to make a lateral

move in order to shift one's career to a different track. Alternatively, one

might consider what appears to be a temporary digression as part of a

longer-term strategy.

The Future Will Be Both Agile and Hardened

In short, IT became agile but security did not. Then the pandemic hit, which

put our situation into stark relief. Overnight, we went from a 10% to 20%

remote workforce to more than 90% remote. In a hot second, business continuity

became something we did, not something we met about. Peter was robbed and Paul

was paid as we diverted budget, changed priorities, and stood up VPNs and

reconfigured networks to allow remote access to our critical systems. In a few

frenetic weeks, we put many assumptions to the test and learned a lot. Many of

our legacy on-premises applications simply aren't elastic enough to support

this new remote workforce. Our massive overnight changes shed new light on our

security's worst enemy — human error — as system misconfigurations skyrocketed

to record highs, leaving us exposed. Predictably, bad actors saw opportunity

in the pandemic and took advantage. Now what? As the weeks turn to months,

it's increasingly clear that there is no going back. As Satya Nadella, CEO of

Microsoft, recently noted, "We've seen two years of digital transformation in

two months."

16 Tech Experts Weigh In On The Potential Of Edge Computing

Edge computing has big implications for machine learning. While training a

machine learning model can be very data-intensive and may require the scale of

public cloud infrastructure, inference and prediction can be pushed to edge

devices. This means that inference and prediction can be accomplished at the

edge, close to where new data is collected. - Sean Maday, Google ... Edge AI

is where edge computing and artificial intelligence come together to provide

intelligence to the edge. This is the next gold mine. There is a lot of

innovation happening at the edge in terms of low power technology—for example,

the way DNN training is done with reinforcement agents. It is this innovation

that will bring a revolution to such industries as precision medicine,

Industry 4.0 and Intelligent IoT. - Shailesh Manjrekar, WekaIO ... Edge

computing will play a key role for companies looking to get ahead in the

experience economy. Core benefits like low latency, scalability and security

create superior digital experiences. Adoption has been hindered without a

standard set of tools to build and deploy edge-enabled apps, but once these

emerge, edge computing will transform business and digital services across all

verticals. - Kris Beevers, NS1
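
The split Sean Maday describes, heavy training in the cloud and lightweight inference at the edge, can be illustrated with a minimal Python sketch. Everything here is an assumption made for illustration: the function `train_in_cloud`, the class `EdgeModel`, the tiny linear model, and the sample readings are hypothetical and stand in for a real training pipeline and device runtime.

```python
import json
from typing import List, Tuple


def train_in_cloud(samples: List[Tuple[float, float]]) -> str:
    """Fit y = slope * x + intercept by least squares and export it as JSON.

    Stands in for the data-intensive step that would run on cloud infrastructure.
    """
    n = len(samples)
    mean_x = sum(x for x, _ in samples) / n
    mean_y = sum(y for _, y in samples) / n
    var_x = sum((x - mean_x) ** 2 for x, _ in samples)
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in samples)
    slope = cov_xy / var_x
    intercept = mean_y - slope * mean_x
    return json.dumps({"slope": slope, "intercept": intercept})


class EdgeModel:
    """Holds only the exported parameters; no training data lives on the device."""

    def __init__(self, exported: str) -> None:
        params = json.loads(exported)
        self.slope = params["slope"]
        self.intercept = params["intercept"]

    def predict(self, x: float) -> float:
        # Inference is a single multiply-add, cheap enough for a small device.
        return self.slope * x + self.intercept


# Hypothetical historical readings that follow y = 2x + 1.
history = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]

exported = train_in_cloud(history)   # runs once, centrally
device = EdgeModel(exported)         # shipped to the edge
reading = device.predict(4.0)        # evaluated where new data is collected
```

Only the small exported parameter set crosses the network, which is the point of pushing inference to the edge: new data can be scored locally without a round trip to the cloud.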

The second wave of fintech disruption: three trends shaping the future of payments

Fortunately, we are standing on the cusp of fintech’s second major wave of disruption – and this one is going to be the real game-changer. Products, processes and ways of working are designed for digital and, crucially, have payments technology embedded in the user experience from start to finish. If you call an Uber, for example, you never think about the payment – you just request a ride, get in and get out. It’s completely frictionless. Why, then, can we not have that experience in everything we do? When online shopping, sites typically ask me for different information, deliver varying experiences and operate payments in a range of ways. As a consumer that’s frustrating, often confusing and encourages me to take my money elsewhere. Extrapolating services like payments and re-bundling them into the tech stack will help consumer-facing companies overcome many of these issues and provide a far better experience to their customers. Digital wallets will be at the heart of this change. They are the enabling technology that will allow payments to sit in the background, independent of the banking system, making everything more seamless.

Exploding Security Perimeter, Remote Worker Ramp Spotlights SD-WAN Limits

While it’s certainly possible to deploy SD-WAN hardware to every employee, it

isn’t always economically or operationally feasible, let alone necessary.

Instead, many enterprises are scaling up their use of virtual private networks

(VPNs), already used by remote workers, to meet demand. This approach,

however, isn’t without challenges, said Fortinet CMO John Maddison, in an

interview with SDxCentral. A typical enterprise with 10,000 employees might

have had 1,000 workers who needed remote access to the data center, he said.

With the onset of the pandemic, “suddenly everybody in the company needs SSL

VPN access.” “A lot of our customers actually were able to spin up a

teleworker solution very quickly,” Maddison said. Fortinet’s enterprise and

data center firewalls, which feature purpose-built security ASICs, can support

tens of thousands of concurrent VPN tunnels, which is something Maddison says

few others can achieve. “Most of our customers were able to switch on almost

10x worth of SSL VPN in the data center without a drop for their systems,” he

said. “A lot of systems, that our competitors have, had a lot of problems

because it was just doing that in CPU or through a standalone system.”

3 common misconceptions about PCI compliance

The first misconception primarily impacts vendors. It’s the misconception that

just because a piece of equipment doesn’t process or transmit credit card

data, it’s not in the scope of PCI. This simply isn’t true. There are

essentially two types of systems in scope. One type is any system that

directly touches credit card information. The second is any outlying, larger

connected system that touches the first type of system. ... The second

misconception involves what PCI compliance fundamentally tries to protect.

While the PCI DSS guidelines have good recommendations for general security,

they’re specifically trying to protect payment-related information. If you’re

implementing the controls well, they do a solid job of increasing overall

security. But at the end of the day, the scope is intentionally narrow. That’s

why one of the biggest issues I see companies struggling with is how to

adequately define their card data environment (CDE). Getting the scope right

for CDE is the most essential thing you can do, and everything else builds on

top of that. This is where understanding the card data flow comes into play.

You must be able to articulate how a credit card transaction is created and

transmitted from beginning to end.
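
The two types of in-scope systems described above can be sketched as a small lookup over a connection map. This is a simplified illustration and not a scoping tool: the function `pci_scope`, the system names, and the one-hop reading of "touch" are all assumptions, and real scoping decisions depend on your network segmentation and your assessor.

```python
from typing import Dict, Set


def pci_scope(
    touches_card_data: Set[str],
    connections: Dict[str, Set[str]],
) -> Dict[str, Set[str]]:
    """Classify systems into the two in-scope types described in the text.

    connections maps each system to the systems it talks to; links are
    treated as undirected (an assumption made for this sketch).
    """
    connected: Set[str] = set()
    for system, peers in connections.items():
        if system in touches_card_data:
            # Anything a card-handling system talks to is pulled into scope.
            connected |= peers
        elif peers & touches_card_data:
            # Anything that talks to a card-handling system is pulled into scope.
            connected.add(system)
    connected -= touches_card_data
    return {"direct": set(touches_card_data), "connected": connected}


# Hypothetical environment, for illustration only.
touches_card_data = {"pos-terminal", "payment-gateway"}
connections = {
    "pos-terminal": {"payment-gateway", "store-router"},
    "inventory-server": {"store-router"},
    "hvac-controller": {"pos-terminal"},
    "marketing-site": set(),
}

scope = pci_scope(touches_card_data, connections)
in_scope = scope["direct"] | scope["connected"]
```

In this made-up environment the HVAC controller never processes or transmits card data, yet it lands in scope because it connects to the point-of-sale terminal, which is exactly the first misconception.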

Amazon puts one-year moratorium on police use of facial recognition software

Much of the dispute over police departments using the software boils down to the

confidence threshold that users set for Rekognition. After the study from

Buolamwini and Raji made headlines, Amazon repeatedly said in documents that

all police departments should use it at a 95% threshold. Police departments

have already said they do not do this, with most using the software at the 80%

threshold that the program is set to by default. All of the studies done by

researchers use the 80% threshold as the benchmark. Despite the issues with

Rekognition, Amazon has openly and widely sold it to police departments and

security forces across the world. The company tried to sell the program to the

Immigration and Customs Enforcement agency but will not say officially how many

police departments are using the software. When pressed on the issue in

February, Amazon Web Services CEO Andy Jassy told PBS that company officials

would stop any police department from using Rekognition if they found out it

was being misused, but the company has released no further information about

how this would work or how they would even know how a police department was

using it.
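
To see why the threshold matters so much, here is a minimal Python sketch that filters the same candidate list at the 80% and 95% thresholds mentioned above. It does not call Rekognition; the function `matches_at_threshold`, the candidate names, and the confidence scores are hypothetical and chosen only for illustration.

```python
from typing import Dict, List

DEFAULT_THRESHOLD = 80.0      # the out-of-the-box setting cited in the article
RECOMMENDED_THRESHOLD = 95.0  # the setting the article says Amazon recommends for police


def matches_at_threshold(candidates: List[Dict], threshold: float) -> List[Dict]:
    """Keep only candidates whose confidence meets or exceeds the threshold."""
    return [c for c in candidates if c["confidence"] >= threshold]


# Hypothetical candidate matches for a single probe image.
candidates = [
    {"name": "person-a", "confidence": 99.1},
    {"name": "person-b", "confidence": 91.4},
    {"name": "person-c", "confidence": 83.0},
    {"name": "person-d", "confidence": 62.5},
]

at_default = matches_at_threshold(candidates, DEFAULT_THRESHOLD)
at_recommended = matches_at_threshold(candidates, RECOMMENDED_THRESHOLD)

print(len(at_default), "candidates reported at 80%")      # 3
print(len(at_recommended), "candidates reported at 95%")  # 1
```

With these made-up scores, lowering the threshold from 95% to 80% triples the number of people reported as possible matches, which is the kind of gap the dispute is about.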

The ten competitive technology-driven influencers for 2020

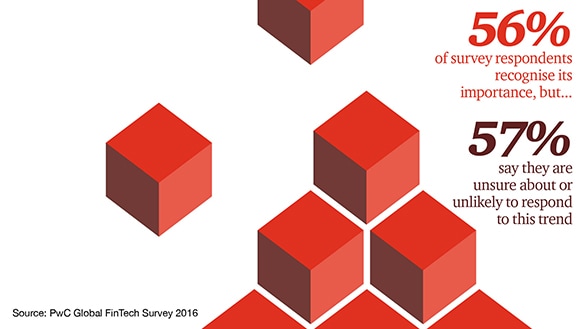

FinTech disruptors have been finding a way in. Disruptors are fast-moving

companies, often start-ups, focused on a particular innovative technology or

process in everything from mobile payments to insurance. And, they have been

attacking some of the most profitable elements of the financial services value

chain. This has been particularly damaging to the incumbents who have

historically subsidized important but less profitable service offerings. In

our recent PwC Global FinTech Survey, industry respondents told us that a

quarter of their business, or more, could be at risk of being lost to

standalone FinTech companies within 5 years. ... Around the world, the middle

class is projected to grow by 180% between 2010 and 2040; Asia’s middle class

is already larger than Europe’s. By 2020, the majority share of the population

considered “middle class” is expected to shift from North America and Europe

to Asia-Pacific. And over the next 30 years, some 1.8 billion people will move

into cities, mostly in Africa and Asia, creating one of the most important new

opportunities for financial institutions. These trends are directly linked to

technology-driven innovation.

What is NLP? Why does your business need an NLP based chatbot?

When it comes to Natural Language Processing, developers can train the bot on

the many interactions and conversations it will go through, and provide

multiple examples of the content it will come in contact with. That gives it a

much wider basis from which to assess and interpret queries more

effectively. So, while training the bot sounds like a

very tedious process, the results are very much worth it. Royal Bank of

Scotland uses NLP in their chatbots to enhance customer experience through

text analysis to interpret the trends from the customer feedback in multiple

forms like surveys, call center discussions, complaints or emails. It helps

them identify the root cause of the customer’s dissatisfaction and helps them

improve their services accordingly. ... NLP based chatbots can help

enhance your business processes and elevate customer experience to the next

level while also increasing overall growth and profitability. They provide

technological advantages to stay competitive in the market, saving time, effort

and costs, which in turn leads to increased customer satisfaction and increased

engagement with your business.

State at the Edge: An Interview with Peter Bourgon

Arguably the hardest part of distributed systems is dealing with faults.

Computers are ephemeral, networks are unreliable, topologies change — the

fallacies of distributed computing are well-known, and accommodating them tends

to dominate the engineering effort of successful systems. And if your system is

managing state, things get much more difficult: maintaining a useful consistency

model for users requires extremely careful coordination, with stronger

consistency typically demanding commensurate effort. This inevitably corresponds

to more bugs and less reliability. CRDTs, or conflict-free replicated data

types, are a relatively novel state primitive that gives us a way to skirt around

a lot of this complexity. I think of them as carefully constructed data types,

each combined with a specific set of operations. Over-simplifying, if you make

sure the operations are associative, commutative, and idempotent, then CRDTs

allow you to apply them in any order, including with duplicates, and get the

same, deterministic results at the end. Said another way, CRDTs have built-in

conflict resolution, so you don’t have to do that messy work in your

application.
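
The three properties named above can be made concrete with a grow-only counter (G-Counter), one of the simplest CRDTs. This is a minimal sketch for illustration and not the implementation discussed in the interview; the class `GCounter` and the replica names are assumptions.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class GCounter:
    """Grow-only counter: one monotonically increasing slot per replica."""

    counts: Dict[str, int] = field(default_factory=dict)

    def increment(self, replica_id: str, amount: int = 1) -> None:
        if amount < 0:
            raise ValueError("a grow-only counter cannot decrease")
        self.counts[replica_id] = self.counts.get(replica_id, 0) + amount

    def merge(self, other: "GCounter") -> "GCounter":
        # Element-wise max is associative, commutative, and idempotent,
        # so replicas converge no matter how merges are ordered or repeated.
        keys = self.counts.keys() | other.counts.keys()
        return GCounter(
            {k: max(self.counts.get(k, 0), other.counts.get(k, 0)) for k in keys}
        )

    def value(self) -> int:
        return sum(self.counts.values())


# Three hypothetical replicas, each counting events locally.
a = GCounter()
a.increment("edge-a", 3)
b = GCounter()
b.increment("edge-b", 2)
c = GCounter()
c.increment("edge-c", 5)

assert a.merge(b) == b.merge(a)                    # commutative
assert a.merge(b).merge(c) == a.merge(b.merge(c))  # associative
assert a.merge(a) == a                             # idempotent

# A duplicated delivery of b's state does not change the result.
total = a.merge(b).merge(c).merge(b).value()
print(total)  # 10
```

Because each replica only ever writes its own slot and merging takes the per-slot maximum, no replica has to coordinate with another before accepting an update, which is the complexity the passage says CRDTs let you skirt.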
