Quote for the day:

“A bad system will beat a good person every time.” -- W. Edwards Deming

🎧 Listen to this digest on YouTube Music

▶ Play Audio Digest

Duration: 22 mins • Perfect for listening on the go.

World Backup Day warnings over ransomware resilience gaps

World Backup Day 2026 serves as a critical reminder of the widening gap between traditional backup strategies and the sophisticated demands of modern ransomware resilience. Industry experts emphasize that many organizations are failing to evolve their recovery plans alongside increasingly complex, fragmented cloud environments spanning AWS, Azure, and SaaS platforms. A major concern highlighted is the tendency for businesses to treat backups as a narrow IT task rather than a foundational pillar of security governance. Statistics from incident response specialists reveal a troubling reality: over half of organizations experience backup failures during significant breaches, and nearly 84% lack a single survivable data copy when first facing an attack. Experts warn that standard native tools often lack the unified visibility and immutability required to withstand malicious encryption or intentional destruction by threat actors. To address these vulnerabilities, the article advocates for a shift toward "breach-informed" recovery orchestration, which includes rigorous, real-world scenario testing and the reduction of internal "blast radiuses." Ultimately, as ransomware attacks surge by over 50% annually, the message is clear: simple data replication is no longer sufficient. True resilience requires a continuous, holistic approach that integrates people, processes, and hardened technology to ensure data is not just stored, but truly recoverable under extreme pressure.

APIs are the new perimeter: Here’s how CISOs are securing them

The rapid proliferation of application programming interfaces (APIs) has fundamentally shifted the cybersecurity landscape, making them the new organizational perimeter. As traditional endpoint protections and web application firewalls struggle to detect sophisticated business-logic abuse, Chief Information Security Officers (CISOs) are adapting their strategies to address this expanding attack surface. The rise of generative AI and autonomous agentic systems has further exacerbated risks by enabling low-skill adversaries to exploit vulnerabilities and automating high-speed interactions that can bypass legacy defenses. To counter these threats, security leaders are implementing robust governance frameworks that include comprehensive API inventories to eliminate "shadow APIs" and integrating automated security validation directly into CI/CD pipelines. A critical component of this modern defense is a shift toward identity-aware security, prioritizing the management of non-human identities and service accounts through least-privilege access. Furthermore, CISOs are centralizing third-party credential management and utilizing specialized API gateways to enforce consistent security policies across diverse cloud environments. By treating APIs as critical business infrastructure rather than mere plumbing, organizations can maintain visibility and control, ensuring that every integration is threat-modeled and continuously monitored for behavioral anomalies in an increasingly interconnected and AI-driven digital ecosystem.
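
The idea of an API inventory that surfaces "shadow APIs" can be illustrated with a small sketch. It is not taken from the article: the endpoint lists and the function name `find_shadow_apis` are hypothetical, and a real program would presumably pull the inventory from an API catalog and the observed routes from gateway or traffic logs. Under those assumptions, anything seen in traffic but missing from the inventory is a candidate shadow API.

```python
# Hypothetical inventory of documented endpoints as (method, path) pairs.
documented = {
    ("GET", "/v1/orders"),
    ("POST", "/v1/orders"),
    ("GET", "/v1/customers"),
}

# Hypothetical routes observed in traffic; duplicates are expected in logs.
observed = [
    ("GET", "/v1/orders"),
    ("POST", "/v1/orders"),
    ("GET", "/internal/debug"),
    ("GET", "/v1/orders"),
]


def find_shadow_apis(documented, observed):
    """Return observed (method, path) pairs that are not in the inventory."""
    return sorted(set(observed) - set(documented))


def find_unobserved_apis(documented, observed):
    """Return documented endpoints that never appeared in traffic."""
    return sorted(set(documented) - set(observed))


if __name__ == "__main__":
    for method, path in find_shadow_apis(documented, observed):
        print(f"undocumented endpoint seen in traffic: {method} {path}")
```

A check like this could plausibly run as one of the automated validation steps in a CI/CD pipeline, failing the build when undocumented endpoints appear.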

Q&A: What SMBs Need To Know About Securing SaaS Applications

In this BizTech Magazine interview, Shivam Srivastava of Palo Alto Networks highlights the critical need for small to medium-sized businesses (SMBs) to secure their Software as a Service (SaaS) environments as the web browser becomes the modern workspace’s primary operating system. With SMBs typically managing dozens of business-critical applications, they face significant risks from visibility gaps, misconfigurations, and the rising threat of AI-powered attacks, which hit smaller firms significantly harder than large enterprises. Srivastava emphasizes that traditional antivirus solutions are insufficient in this browser-centric era, particularly when employees use unmanaged devices or accidentally leak sensitive data into generative AI tools. To mitigate these risks, he advocates for a "crawl, walk, run" strategy that prioritizes the adoption of a secure browser as the central command center for security. This approach allows businesses to fulfill their side of the shared responsibility model by protecting the "last mile" where users interact with data. By implementing secure browser workspaces, multi-factor authentication, and AI data guardrails, SMBs can establish a manageable yet highly effective defense. As the landscape evolves toward automated AI agents and app-to-app integrations, centering security on the browser ensures that small businesses remain protected against the next generation of automated, browser-based threats.

Developers Aren't Ignoring Security - Security Is Ignoring Developers

The article "Developers Aren’t Ignoring Security, Security is Ignoring Developers" on DEVOPSdigest argues that the traditional disconnect between security teams and developers is not due to developer negligence, but rather a failure of security processes to integrate with modern engineering workflows. The central premise is that developers are fundamentally committed to quality, yet they are often hindered by security tools that prioritize "gatekeeping" over enablement. These tools frequently generate excessive false positives, leading to alert fatigue and friction that slows down delivery cycles. To bridge this gap, the author suggests that security must "shift left" not just in timing, but in mindset, moving away from being a final hurdle to becoming an automated, invisible part of the development lifecycle. This involves implementing security-as-code, providing actionable feedback within the Integrated Development Environment (IDE), and ensuring that security requirements are defined as clear, achievable tasks rather than abstract policies. Ultimately, the piece contends that for DevSecOps to succeed, security professionals must stop blaming developers for gaps and instead focus on building developer-centric experiences that make the secure path the path of least resistance.

Beyond the Sandbox: Navigating Container Runtime Threats and Cyber Resilience

In the article "Beyond the Sandbox: Navigating Container Runtime Threats and Cyber Resilience," Kannan Subbiah explores the evolving landscape of cloud-native security, emphasizing that traditional "Shift Left" strategies are no longer sufficient against 2026’s sophisticated runtime threats. Unlike virtual machines, containers share the host kernel, creating an inherent "isolation gap" that attackers exploit through container escapes, poisoned runtimes, and resource exhaustion. To bridge this gap, Subbiah advocates for advanced isolation technologies such as Kata Containers, gVisor, and Confidential Containers, which provide hardware-level protection and secure data in use. Central to building a "digital immune system" is the implementation of cyber resilience strategies, including eBPF for deep kernel observability, Zero Trust Architectures that prioritize service identity, and immutable infrastructure to prevent configuration drift. Furthermore, the article highlights the increasing importance of regulatory compliance, referencing global standards like NIST SP 800-190, the EU’s DORA and NIS2, and Indian frameworks like KSPM. Ultimately, the author argues that true resilience requires shifting from a "fortress" mindset to an automated, proactive approach where containers are continuously monitored and secured against the volatility of the runtime environment, ensuring robust defense in a high-density, multi-tenant cloud ecosystem.

AI-first enterprises must treat data privacy as architecture, not an afterthought

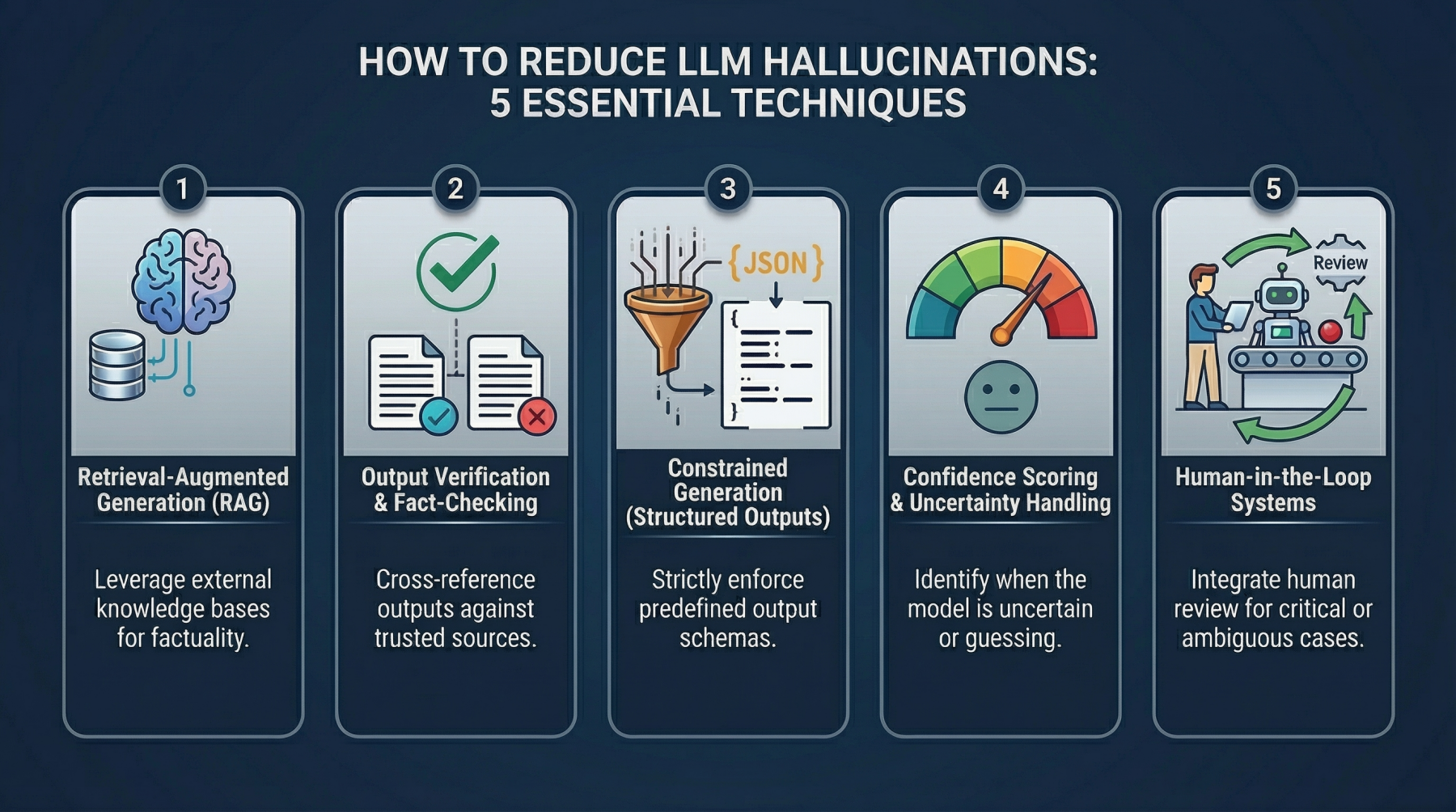

The Balance Between AI Speed and Human Control

The article "The Balance Between AI Speed and Human Control" explores the critical tension between rapid technological advancement and the necessity of human oversight. It argues that issues like AI hallucinations are often inherent design consequences of prioritizing fluency and speed over safety safeguards. Currently, global governance is fragmented: the European Union emphasizes rigid regulation, the United States favors innovation with limited accountability, and India seeks a middle path focusing on deployment scale. However, each model faces significant challenges, such as algorithmic bias or systemic failures. The author suggests moving toward a "copilot" framework where AI serves as decision support rather than an autocrat. This requires implementing three interconnected architectural pillars: impact-aware modeling, context-grounded reasoning, and governed escalation with explicit thresholds for human intervention. As artificial general intelligence develops incrementally, nations must shift from treating human judgment as a bottleneck to viewing it as a vital safeguard. Ultimately, the goal is to harmonize efficiency with empathy, ensuring that technological progress does not come at the cost of moral accountability or human potential. By adopting binding technical standards for human overrides in consequential decisions, society can ensure that AI remains a tool for empowerment rather than an uncontrolled force.
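
To make "governed escalation with explicit thresholds" more concrete, here is a minimal sketch of what such a rule might look like. Everything in it is an assumption for illustration, not the article's design: the `Decision` fields, the 0-to-1 scales, the threshold values, and the `route` function are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical thresholds; a real deployment would set these by policy.
IMPACT_THRESHOLD = 0.7      # at or above this, a human must review
CONFIDENCE_THRESHOLD = 0.9  # below this, a human must review


@dataclass(frozen=True)
class Decision:
    action: str
    impact: float      # assumed scale: 0.0 (trivial) to 1.0 (severe)
    confidence: float  # assumed scale: 0.0 to 1.0, reported by the model


def route(decision: Decision) -> str:
    """Decide whether an AI recommendation may proceed without a human."""
    if decision.impact >= IMPACT_THRESHOLD:
        # Consequential decisions are always escalated, however confident.
        return "human_review"
    if decision.confidence < CONFIDENCE_THRESHOLD:
        # Low-confidence recommendations are escalated as well.
        return "human_review"
    return "auto_approve"


if __name__ == "__main__":
    print(route(Decision("deny_loan", impact=0.9, confidence=0.99)))
    print(route(Decision("tag_email", impact=0.1, confidence=0.95)))
```

The point of the sketch is that the escalation rule is explicit and auditable: high-impact actions go to a person regardless of model confidence, which matches the "copilot" framing of AI as decision support.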

Securing agentic AI is still about getting the basics right

As agentic AI workflows transform the enterprise landscape, Sam Curry, CISO of Zscaler, emphasizes that robust security remains grounded in fundamental principles. Speaking at the RSAC 2026 Conference, Curry highlights a major shift toward silicon-based intelligence, where AI agents will eventually conduct the majority of internet transactions. This evolution necessitates a renewed focus on two primary pillars: identity management and runtime workload security. Unlike traditional methods, securing these agents requires sophisticated frameworks like SPIFFE and SPIRE to ensure rigorous identification, verification, and authentication. Organizations must implement granular authorization controls and zero-trust architectures to contain risks, such as autonomous agent sprawl or unauthorized data access. Furthermore, while automation can streamline governance and compliance, Curry warns that security in adversarial environments still requires human judgment to counter unpredictable threats. Ultimately, the successful deployment of agentic AI depends on mastering the basics: cleaning infrastructure, establishing clear accountability, and ensuring auditability. By treating AI agents as distinct identities within a segmented network, businesses can foster innovation without sacrificing security. This balanced approach ensures that as technology advances, the underlying security architecture remains resilient against emerging threats in a world increasingly dominated by autonomous digital entities.

Can Your Bank’s IT Meet the Challenge of Digital Assets?

The article from The Financial Brand examines the "side-core" (or sidecar) architecture as a transformative solution for traditional banks seeking to integrate digital assets and stablecoins into their operations. Traditional banking core systems are often decades old and technically incapable of supporting the high-precision ledgers (often requiring eighteen decimal places) and the 24/7/365 real-time settlement demands of blockchain-based assets. Rather than attempting a costly and risky "rip-and-replace" of these legacy cores, financial institutions are increasingly adopting side-cores: modern, cloud-native platforms that run in parallel with the main system. This specialized architecture allows banks to issue tokenized deposits, manage stablecoins, and facilitate instant cross-border payments while maintaining their established systems for traditional functions. By leveraging a side-core, banks can rapidly deploy crypto-native services, attract younger demographics, and secure new deposit streams without significant operational disruption. The article highlights that as regulatory clarity improves through frameworks like the GENIUS Act, the ability to operate these dual systems will become a key competitive advantage for regional and community banks. Ultimately, the side-core approach provides a modular path toward modernization, allowing traditional institutions to remain relevant in an era defined by programmable finance and digital-native commerce.
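
The eighteen-decimal requirement is easier to appreciate with a small example. The sketch below is not from the article; it assumes the common convention of storing balances as integer counts of the smallest unit (10^-18 of a token), and the helper names `to_base_units` and `format_units` are hypothetical. It shows why a standard binary floating-point field cannot hold such amounts exactly.

```python
DECIMALS = 18                      # precision cited in the summary above
UNITS_PER_TOKEN = 10 ** DECIMALS   # smallest units in one whole token


def to_base_units(amount: str) -> int:
    """Parse a non-negative decimal string into integer base units."""
    whole, _, frac = amount.partition(".")
    if len(frac) > DECIMALS:
        raise ValueError(f"more than {DECIMALS} decimal places: {amount}")
    # Pad the fraction to exactly 18 digits so it maps to base units.
    return int(whole or "0") * UNITS_PER_TOKEN + int(frac.ljust(DECIMALS, "0"))


def format_units(units: int) -> str:
    """Render non-negative integer base units as an 18-decimal string."""
    whole, frac = divmod(units, UNITS_PER_TOKEN)
    return f"{whole}.{frac:0{DECIMALS}d}"


if __name__ == "__main__":
    amount = "1.000000000000000001"
    # A 64-bit float silently drops the last digit.
    print(float(amount) == 1.0)
    # Integer base units keep it exactly.
    print(format_units(to_base_units(amount)))
```

Python integers are arbitrary precision, so the arithmetic stays exact at any balance size; a ledger built on fixed-width decimal or floating-point fields would need a different representation to achieve the same.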

Everything You Think Makes Sprint Planning Work, Is Slowing Your Team Down!

In his article, Asbjørn Bjaanes argues that traditional Sprint Planning "best practices," such as assigning work and striving for accurate estimation, actually undermine team agility by stifling ownership and clarity. He identifies several key pitfalls: first, leaders who assign stories strip developers of their internal sense of control, turning owners into compliant executors. Instead, teams should self-select work to foster initiative. Second, estimation should be viewed as an alignment tool rather than a forecasting exercise; "estimation gaps" are vital opportunities to surface hidden complexities and synchronize mental models. Third, the author warns against mid-sprint interruptions and automatic story rollovers. Rolling over unfinished work without scrutiny ignores shifting priorities and cognitive biases, while unplanned additions break the sanctity of the team’s commitment. Furthermore, Bjaanes emphasizes that a Sprint Backlog without a clear, singular goal is merely a "to-do list" that leaves teams directionless under pressure. Ultimately, real improvement requires shifting underlying beliefs about control and trust rather than simply refining process steps. By embracing healthy disagreement during planning and protecting the team’s autonomy, organizations can move beyond mere compliance toward true high performance, ensuring that planning serves as a strategic compass rather than an administrative burden.
